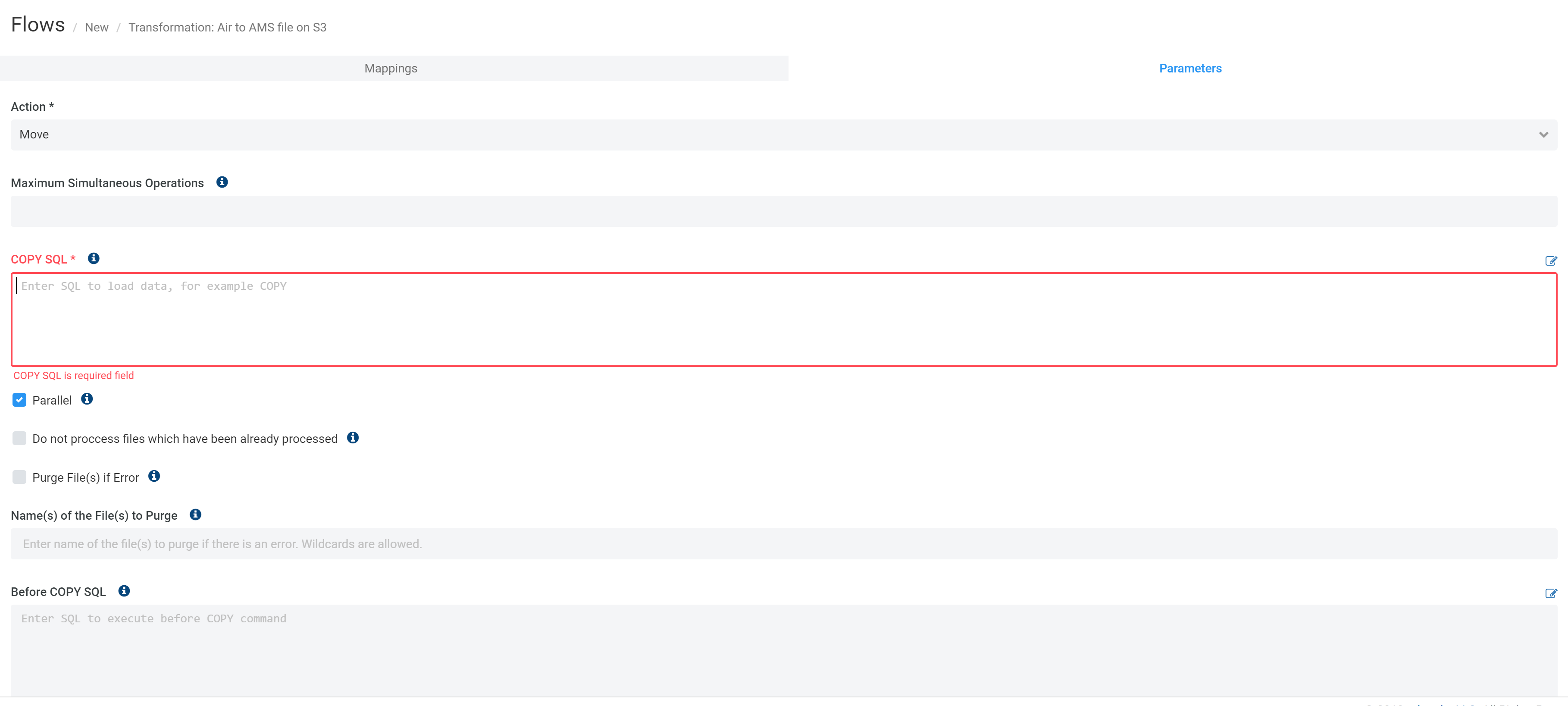

I have a job in Redshift that is responsible for pulling 6 files every month from S3. The file names follow a standard naming convention, "file_label_MonthNameYYYY_Batch01.CSV", and the batch number ranges from 1 to 6. I'd like to modify the COPY command below so that the file name in the S3 directory changes dynamically and I don't have to hard-code the month name, YYYY, and batch number. Currently, here is what I have, which is not efficient:

    COPY tbl_name (column_name1, column_name2, column_name3)
    FROM 's3://bucket_name/folder_name/Static_File_Label_July2021_Batch01.CSV'
    CREDENTIALS 'aws_access_key_id=xxx;aws_secret_access_key=xxxxx'
    CSV;  -- assumes comma-separated files

    COPY tbl_name (column_name1, column_name2, column_name3)
    FROM 's3://bucket_name/folder_name/Static_File_Label_July2021_Batch02.CSV'
    CREDENTIALS 'aws_access_key_id=xxx;aws_secret_access_key=xxxxx'
    CSV;

    -- ...and so on, one hard-coded COPY per batch up to Batch06

Next month the dynamic part of the file name will change to August2021_Batch01, August2021_Batch02, and so forth. Is there a way to do this? Thank you in advance.

There is code that has been running in production for 6 months; it runs in a loop over a given number of tables and does a Redshift COPY for each one. It had been running successfully until 31st October.

You can run multiple COPY commands, but of course it will affect performance; you just need to do some tests to gauge the level of slowdown you can accept. Concatenating the 500 into 1 uber manifest took between 45 and 90 minutes, and a Redshift COPY of a single manifest took about 3 minutes.

To validate data files before you actually load the data, use the NOLOAD option with the COPY command. With NOLOAD, no rows are actually loaded into the table: Amazon Redshift parses the input file and displays any errors that occur.

How do you copy from S3 to Redshift with jsonpaths whilst defaulting some columns to null? The COPY command will not work in this case because it encounters more columns in the source data than are available in the target table. It's the other way round: the target table has fewer columns than the source data at S3.

We have a file in S3 that is loaded into Redshift via the COPY command. The import is failing because a VARCHAR(20) value contains an Ä which is being translated into.

For a currency column, one approach is to perform N COPYs (one per currency) and manually set the currency column to the correct value with each COPY: COPY all the data for a currency into the table, leaving the 'currency' column NULL, then set that column to the correct currency before the next COPY. Since the S3 key contains the currency name, it would be fairly easy to script this up.
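For the monthly file-name question, one option is to generate the six COPY statements from the run date instead of hard-coding them. The Python sketch below is only an illustration: it reuses the placeholder bucket, folder, label, table, and column names from the example COPY, and the helper names `file_key`, `copy_statement`, and `monthly_statements` are hypothetical. It assumes English month names; `strftime("%B")` follows the process locale, which stays the C locale (English) unless the program calls `locale.setlocale`. The `noload` flag adds the NOLOAD option so the same files can be checked first without loading any rows.

```python
from datetime import date

# Placeholder names copied from the example COPY; replace with real values.
BUCKET = "bucket_name"
PREFIX = "folder_name"
FILE_LABEL = "Static_File_Label"
TABLE = "tbl_name"
COLUMNS = ["column_name1", "column_name2", "column_name3"]
NUM_BATCHES = 6  # batch numbers run from 1 to 6


def file_key(run_date: date, batch: int) -> str:
    """Return e.g. 'Static_File_Label_July2021_Batch01.CSV' for July 2021, batch 1."""
    # %B is the full month name and %Y the four-digit year; both follow the
    # process locale, which is the C locale (English names) by default.
    month_year = run_date.strftime("%B%Y")
    return f"{FILE_LABEL}_{month_year}_Batch{batch:02d}.CSV"


def copy_statement(run_date: date, batch: int, credentials: str,
                   noload: bool = False) -> str:
    """Build one COPY statement; NOLOAD only parses the file and reports errors."""
    uri = f"s3://{BUCKET}/{PREFIX}/{file_key(run_date, batch)}"
    options = "CSV NOLOAD" if noload else "CSV"
    return (
        f"COPY {TABLE} ({', '.join(COLUMNS)})\n"
        f"FROM '{uri}'\n"
        f"CREDENTIALS '{credentials}'\n"
        f"{options};"
    )


def monthly_statements(run_date: date, credentials: str,
                       noload: bool = False) -> list[str]:
    """One COPY per batch for the month that contains run_date."""
    return [copy_statement(run_date, batch, credentials, noload)
            for batch in range(1, NUM_BATCHES + 1)]


if __name__ == "__main__":
    creds = "aws_access_key_id=xxx;aws_secret_access_key=xxxxx"
    for statement in monthly_statements(date.today(), creds):
        print(statement, end="\n\n")
```

Each returned string can be executed in order through whatever SQL client or driver the job already uses; running the list once with `noload=True` gives a dry run of the month's files.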
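A Redshift manifest is a JSON file whose `entries` list names the S3 objects to load, and a single COPY with the MANIFEST option loads all of them. As a minimal sketch of the concatenation step mentioned above, assuming the individual manifests have already been read into memory as JSON text, the hypothetical `merge_manifests` function combines their entries into one manifest. Skipping repeated URLs is a design choice added here so the same object is not listed twice; drop that check if plain concatenation is wanted.

```python
import json
from typing import Iterable


def merge_manifests(manifest_texts: Iterable[str]) -> str:
    """Concatenate the 'entries' of several COPY manifests into one manifest.

    Entries keep their original order; an entry whose URL was already seen
    is skipped so the same S3 object is not listed twice.
    """
    merged = []
    seen_urls = set()
    for text in manifest_texts:
        for entry in json.loads(text).get("entries", []):
            if entry["url"] not in seen_urls:
                seen_urls.add(entry["url"])
                merged.append(entry)  # keeps 'mandatory' and any other keys as-is
    return json.dumps({"entries": merged}, indent=2)


if __name__ == "__main__":
    first = '{"entries": [{"url": "s3://bucket_name/part-1", "mandatory": true}]}'
    second = '{"entries": [{"url": "s3://bucket_name/part-2", "mandatory": true}]}'
    print(merge_manifests([first, second]))
```

The resulting text would then be uploaded to S3 and referenced by one COPY that uses the MANIFEST option.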
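For the jsonpaths question: when COPY is given a column list, the JSONPaths file must contain exactly one expression per listed column, in the same order. Source fields that no expression selects are ignored, and table columns left out of the list receive their DEFAULT value, or NULL if they have none. The sketch below builds both the JSONPaths document and the COPY statement from one hypothetical mapping of target columns to JSON field names, so the two cannot drift out of step. It assumes simple field names that work with dot notation; the function names are illustrative only.

```python
import json


def jsonpaths_document(column_to_field: dict[str, str]) -> str:
    """One JSONPath expression per target column, in column-list order."""
    # Dot notation assumes plain field names (letters, digits, underscores).
    paths = [f"$.{field}" for field in column_to_field.values()]
    return json.dumps({"jsonpaths": paths}, indent=2)


def copy_with_jsonpaths(table: str, column_to_field: dict[str, str],
                        data_uri: str, jsonpaths_uri: str,
                        credentials: str) -> str:
    """COPY only the mapped columns; unlisted columns get DEFAULT or NULL."""
    columns = ", ".join(column_to_field)  # dicts keep insertion order
    return (
        f"COPY {table} ({columns})\n"
        f"FROM '{data_uri}'\n"
        f"CREDENTIALS '{credentials}'\n"
        f"JSON '{jsonpaths_uri}';"
    )


if __name__ == "__main__":
    # Hypothetical mapping: table column -> field in the source JSON.
    mapping = {"column_name1": "id", "column_name2": "customer_name"}
    print(jsonpaths_document(mapping))
    print(copy_with_jsonpaths("tbl_name", mapping,
                              "s3://bucket_name/folder_name/data.json",
                              "s3://bucket_name/folder_name/jsonpaths.json",
                              "aws_access_key_id=xxx;aws_secret_access_key=xxxxx"))
```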
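For the failing VARCHAR(20) import, one likely cause, offered as an assumption rather than a diagnosis: Redshift measures VARCHAR length in bytes, and Ä takes two bytes in UTF-8, so a 20-character value containing it needs 21 bytes. A quick pre-check is to scan a local copy of the file for cells whose UTF-8 size exceeds the column's limit. The function name `oversize_cells` and the sample CSV layout are hypothetical.

```python
import csv
import io
from typing import Iterator, Tuple


def oversize_cells(csv_text: str, column_index: int,
                   max_bytes: int = 20) -> Iterator[Tuple[int, str, int]]:
    """Yield (record_number, value, utf8_size) for cells longer than max_bytes.

    The byte count, not the character count, is what is compared, because
    a VARCHAR(n) column holds n bytes.
    """
    reader = csv.reader(io.StringIO(csv_text))
    for record_number, row in enumerate(reader, start=1):
        if column_index >= len(row):
            continue  # short row: nothing to check in this column
        value = row[column_index]
        size = len(value.encode("utf-8"))
        if size > max_bytes:
            yield record_number, value, size


if __name__ == "__main__":
    sample = "id,name\n1,Ä" + "a" * 19 + "\n2," + "a" * 20 + "\n"
    for record in oversize_cells(sample, column_index=1):
        print(record)  # the second record: 20 characters but 21 bytes
```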
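The per-currency approach can be scripted roughly as described: for each S3 key, a COPY that leaves `currency` out of the column list (so those rows get NULL, assuming the column has no DEFAULT), followed by an UPDATE that fills in the currency parsed from the key. The key pattern (`..._USD.csv`), table, and column names below are hypothetical, and the UPDATE only hits the right rows if the statements run strictly one after another and no earlier rows have a NULL currency.

```python
import re
from typing import List

# Hypothetical key layout: the currency code is the three letters right
# before the extension, e.g. "folder_name/rates_USD.csv".
CURRENCY_IN_KEY = re.compile(r"_([A-Za-z]{3})\.csv$", re.IGNORECASE)


def currency_from_key(key: str) -> str:
    match = CURRENCY_IN_KEY.search(key)
    if match is None:
        raise ValueError(f"no currency code found in key {key!r}")
    return match.group(1).upper()


def per_currency_statements(table: str, columns: List[str], bucket: str,
                            keys: List[str], credentials: str) -> List[str]:
    """Return a COPY followed by an UPDATE for each key, to run strictly in order.

    `columns` must not include `currency`, so the freshly loaded rows get NULL
    there (assuming the column has no DEFAULT); the UPDATE then fills them in.
    """
    statements = []
    for key in keys:
        currency = currency_from_key(key)
        statements.append(
            f"COPY {table} ({', '.join(columns)}) "
            f"FROM 's3://{bucket}/{key}' "
            f"CREDENTIALS '{credentials}' CSV;"
        )
        statements.append(
            f"UPDATE {table} SET currency = '{currency}' WHERE currency IS NULL;"
        )
    return statements
```

Running each COPY/UPDATE pair inside one transaction would keep a failed load from leaving NULL rows behind for the next UPDATE to mislabel.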
